Who We Are, and What We Do

Rival is developing a solution catered to solving the toughest cybersecurity challenges and time sinks, freeing human security teams to engage in the creative and proactive defense.

Rival’s autonomous platform rests on a huge collection of domain-specific approaches that push boundaries of agentic performance on security-related tasks.

Beyond these, Rival also implements generic advancements in LLM reasoning techniques, developed in-house by our leading cyber and machine learning experts to be scalable, trustworthy, and shatter existing analytical benchmarks.

The Challenge of Reasoning

On our path from research to productization, we came face-to-face with the toughest hurdles that agentic systems face in widespread adoption for real-world enterprise use cases.

Chief among these challenges is a simple fact - today’s agentic approaches excel at toy examples and trivial problems, but their reasoning falls apart when approaching problems that human reasoning faces in the corporate world.

To illustrate this, lets take a look at a more general task than cybersecurity - corporate data analysis.

All large companies hold vast datasets and have human analysts responsible for mining that data to extract critical business insights.

Translating natural language queries to executable analytical logic (like SQL) is one of the standard benchmarks to measure the effectiveness of different approaches for logical reasoning over complex data.

The Spider(man) Benchmark - What It Is, and Why It Is Relevant

A standard used to measure this are the “Spider” family of text-to-sql benchmarks. On first glance, things look good for LLMs: Spider 1.0’s leaderboard reports that state-of-the-art agents achieve above 90% accuracy in translating natural language analysis questions to verifiable answers backed by SQL!

Things start to fall apart when you examine the details of the benchmark itself, however. The dataset consists of challenging questions such as:

The problem is obvious: Spider 1.0 contains the same kind of trivial, toy examples we mentioned above.

The folks at Spider realized that too, and set to work creating a new coliseum in which to test the juggernauts or artificial reasoning - Spider 2.0.-- For each customer, calculate their daily balances for every day between

And, what do you know, the numbers go down - hard. Spider 2.0-lite’s leaderboard has the best approach achieving only 37% on these kind of analytical tasks. Approaches that achieved above 80% in Spider 1.0, like DailSQL, achieve only 5.68%!

Looking at Spider 2.0’s questions, we immediately see that they are significantly harder, and much better represent the kind of real world questions an AI agent would have to reason through. Case in point:

The specifics of questions that next-generation cyber platforms need to answer are different than the wide spectrum of subjects in Spider, but their complexity is similar. Take this example adapted from one of our customers:

Obviously, a 30% success rate in this case might as well be 0%. If an agent can’t reliably carry out this task, our customers cannot and will not use them at all, keeping security teams preoccupied with the copy-and-paste tasks necessary to perform this analysis manually.

Rival’s Approach - Orchestrating Workflows

We started at a disadvantage - not only do we have to break these existing benchmarks, we have to do it in a way that is trustworthy and can operate at scale. We realized that a fixed agentic design would not answer these criteria.

Taking inspiration from cutting-edge academic approaches, Rival’s core reasoning approach implements a two-stage process: workflow generation and workflow orchestration.

See this

Generation

When Rival is first given the description of an analytical workflow, it breaks down the described problem into discrete logical steps that solve the problem. The operations allowed in each step is definable and extensible by the user - such as SQL queries, python code, free-form agentic reasoning, and so on.

This is a similar approach to prompting techniques that have seen success in sterile academic environments, such as Plan-and-Solve and Skeleton-of-Thought.

Each logical step is created with well-defined output schemas, as well as inputs that link to the outputs of other steps. Together, this allows Rival to generate plans with complex interdepencies while still being able to ensure that each individual piece of logic is well-defined.

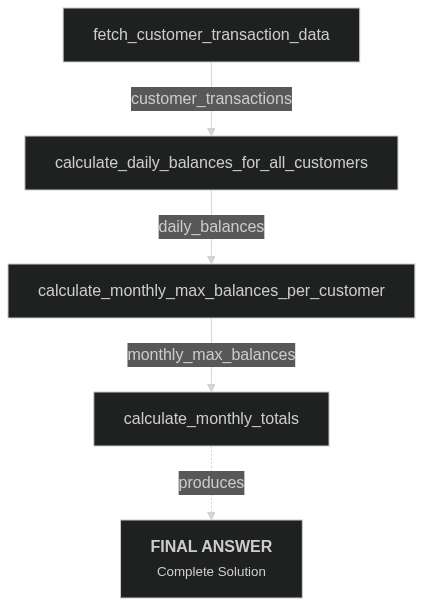

These can be relatively simple - this is the generated flow for the above Spider2 question regarding bank customers:

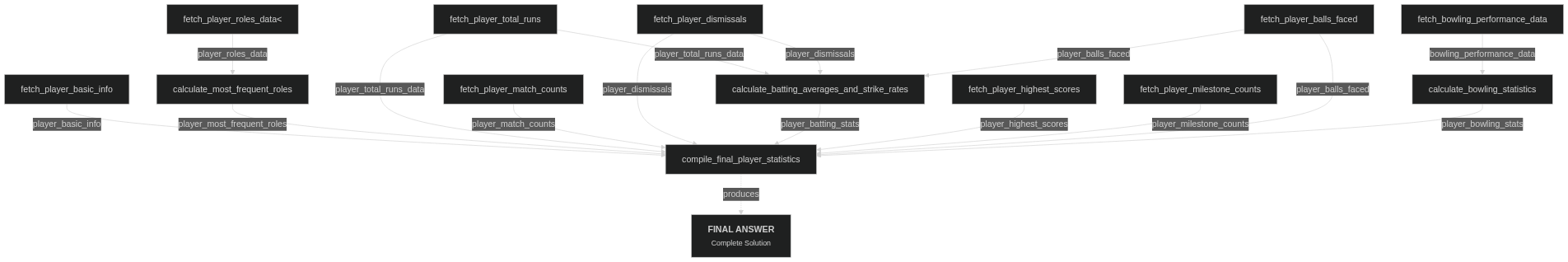

Or they can be quite complicated, such as when asked to summarize complicated aggregation statistics on cricket players that are distributed throughout multiple different tables:

Composition and Orchestration

Once a plan has been constructed, Rival has to implement the individual steps.

Each of these steps could be any number of “functions” that have fixed input and output schemas: SQL queries, Python/typescript code, or even on-the-fly subagents!

Large amounts of research has demonstrated the effectiveness of in-context few-shot prompting - where examples of correct behavior are given to the model at generation time.

Given that the plan is a DAG, Rival is able to implement the steps “in order”. Outputs of prerequisite steps are generated and “previewed” to the steps that depend on them, while still enabling full parallelization of independent steps for speed.

Rival also enforces the output schemas of each step. This acts as another guardrail against insidious logical errors and hallucinations, and empirically serves to “remind” Rival of how each individual step’s logic fits into the bigger picture.

Once orchestration is complete, a complete final answer has been fully assembled. However, assuming that a correct workflow has been composed, the same workflow can be run repeatedly to generate up-to-date answers to the same question, without requiring any additional inference.

Benchmarking Rival

Overview of the Technique

In order to test the flexibility and power of our approach, we created a slimmed-down version of our core logic. We stripped away the cybersecurity-specific logic, simplified some of the code generation capabilities, and rewrote some of the agent prompts to make more sense in the context of the benchmark.

We then gave this version of Rival access to the SQLite portion of the Spider 2.0-lite (not the Snowflake or Bigquery hosted components) benchmark, and asked Rival to solve each of the benchmark’s questions.

Given the potential for Rival to use code as well as SQL queries solve the problem, we would often receive answers that were semantically correct, but with additional information or in a schema that did not match the tabular golden outputs of the benchmark exactly.

To properly grade Rival’s performance, we inspected the outputs for semantic identity - programmatically, using a critic LLM, and with a final manual pass. We categorized each of Rival’s outputs into one of the following categories:

Semantically identical (potentially with additional information)

Semantically identical, but with rounding mismatches (3.3 and 3.333333 are the same value, right?)

Semantically identical, with an alternate valid interpretation of the initial prompt.

As an example, some challenges asked users to be distributed into buckets based on ranges of months. Rival identified these buckets by their end month, while the benchmark identified them by their start month. The provided question didn’t specify a single “correct” method, so Rival gets points for this.

Not identical (an error)

Results

So, how does this match up to the existing Spider 2.0 leaderboard (Taken from here on 19/6/25)?

It blows existing approaches out of the water. Rivalbot successfully answers nearly 63% of the challenge successfully.